More than half of new English-language articles on the internet are not written by people. That is a measurement, not a forecast: Graphite analyzed 65,000 URLs from Common Crawl to get it. These articles weren't edited by a neural network or "enhanced" with AI. They were generated from scratch. Before ChatGPT launched, machine-written text made up roughly five percent of online content. In under three years, that share grew roughly tenfold.

But who writes the content is only part of it. Who reads it matters just as much. Imperva's 2025 report found that automated traffic surpassed human traffic for the first time in a decade. More than half of all requests to websites now come from bots.

About half of newly published articles are machine-made, and about half of web traffic comes from machines. Humans are becoming the minority on the internet, both as writers and as readers.

What the Research Shows About AI Content at 50%

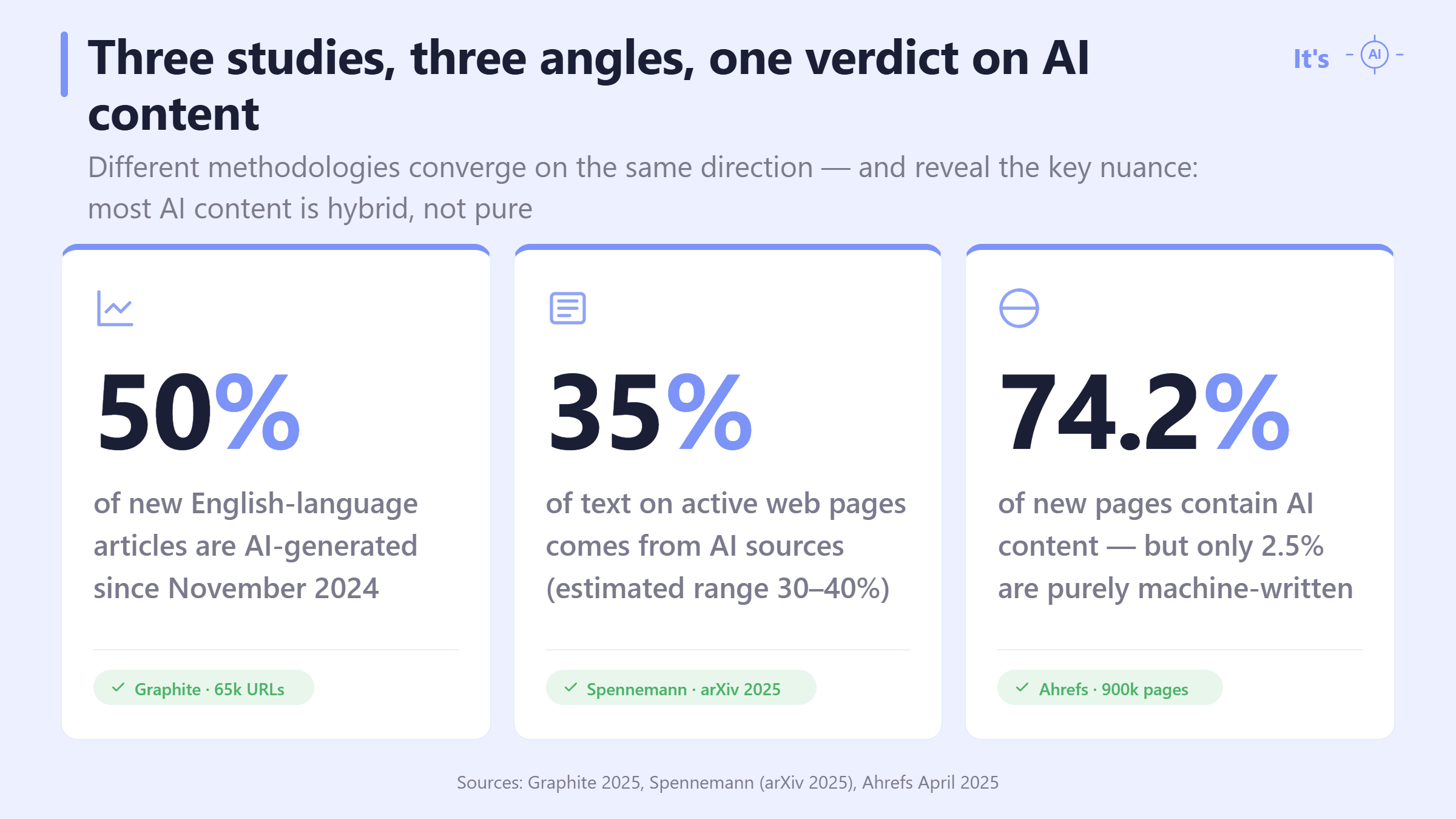

This isn't a single-study claim. Several independent studies with different methodologies point in the same direction, though their numbers measure slightly different things.

The three studies don't measure the same thing, and that's what makes their agreement informative. Graphite counts articles in Common Crawl. Spennemann's study on arXiv samples text across active web pages. Ahrefs looks at brand-new pages and checks whether any AI touched them. The methodologies differ; the conclusion doesn't.

Ahrefs' breakdown changes how to read the others. Pure AI content is the small slice. The vast majority of AI content on the web is mixed with human writing: a person edits a generated draft, or a generator extends a person's outline. Hybrid content doesn't look "written by a robot." It looks like a regular article, and that is exactly why it is harder to spot without an AI content detector.

Bots Now Outnumber Humans Online for the First Time in a Decade

Content is only part of the picture. The other part is who consumes it.

According to Imperva, bot traffic hit 51% of all website requests in 2024. The trend isn't slowing: malicious bot traffic alone has climbed for six straight years and now accounts for over a third of all internet traffic.

For publishers and brands, this is a concrete, practical problem. Traffic metrics are getting diluted. A thousand page views might mean three hundred actual readers, with the rest generated by scrapers and crawlers. When machines produce content and other machines consume it, it gets hard for a human to tell what in it is actually real.
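As a rough back-of-the-envelope check, the dilution arithmetic can be sketched as follows. The function name `estimated_human_views` is hypothetical, and the sketch assumes a site's bot share applies evenly to its page views, which real analytics may not bear out. The 70% figure is an illustrative assumption, not a number from any of the reports.

```python
def estimated_human_views(page_views: int, bot_share: float) -> int:
    """Estimate human page views, assuming bots make up `bot_share` of all views."""
    if not 0.0 <= bot_share <= 1.0:
        raise ValueError("bot_share must be between 0 and 1")
    return round(page_views * (1.0 - bot_share))


# Imperva's overall figure: 51% of requests come from bots.
average_site = estimated_human_views(1000, 0.51)

# A heavily scraped site (assumed 70% bot share, purely illustrative).
scraped_site = estimated_human_views(1000, 0.70)
```

At the overall 51% figure, a thousand views works out to about 490 human ones. The three-hundred-reader scenario corresponds to a site where roughly 70% of views are automated.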

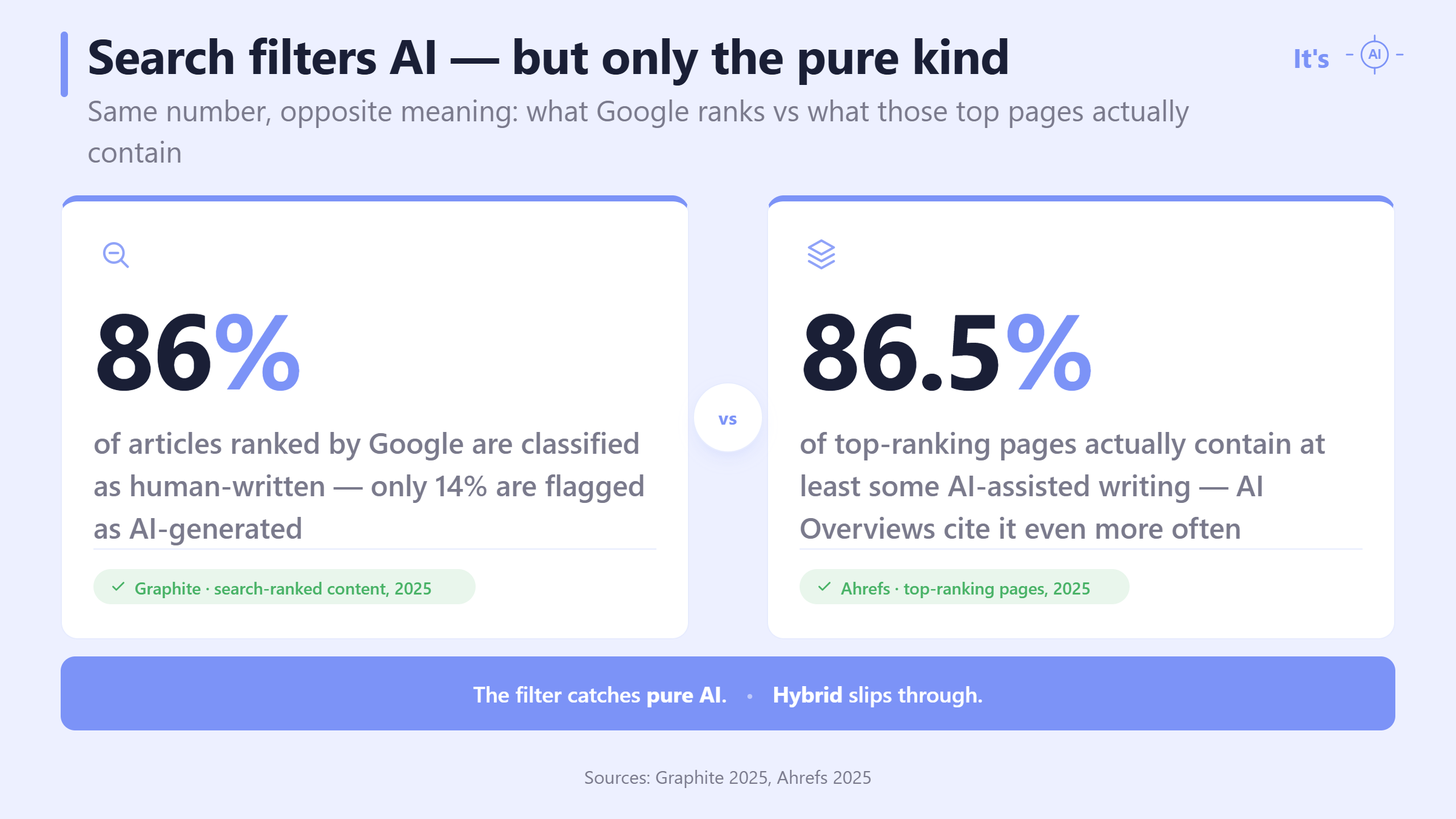

Search Engines Filter AI Out — But Only the Fully Synthetic Stuff

There's a detail in these numbers that's easy to miss. Despite the scale of AI content online, search algorithms handle filtering better than you might expect — for one kind of AI content.

Graphite and Ahrefs looked at the same Google top results from different angles. Graphite asked whether AI dominates the page; Ahrefs asked whether any AI is present at all. The numbers come back almost identical — and tell opposite stories.

For pages that rank in the top results without crossing the "mostly AI" line, the algorithm still works, and ChatGPT and Perplexity behave similarly, citing human-written articles 82% of the time. Below the top 20 sit billions of pages with no filtering whatsoever. Social media, messengers, corporate blogs, email newsletters: all of that content reaches the reader directly, with no quality screen in between. And in those spaces, verifying whether something was written by AI falls entirely on the reader.

How a CEO's Video Forecast Became Europol's "90% by 2026"

A quick note on a number that keeps showing up in these discussions. "By 2026, 90% of internet content will be AI-generated."

The trail is shorter than it looks. The figure appears on page 5 of Europol's 2022 report "Facing Reality? Law Enforcement and the Challenge of Deepfakes", which stated that "experts estimate that as much as 90 percent of online content may be synthetically generated by 2026." Europol's "experts" turn out to be one expert: Victor Riparbelli, CEO of Synthesia, a company that sells synthetic media. The quote reached the report via Nina Schick's 2020 book "Deepfakes: The Coming Infocalypse," and Riparbelli's original framing was that synthetic video, not all online content, might reach 90% in three to five years.

So: one CEO's optimistic projection about video, broadened by Europol into "90% of online content," now circulates as a documented forecast. The figure has been widely criticized as overstated, and current research does not support it. The real figure is serious enough on its own: 50–52%, confirmed by multiple independent studies, needs no inflation.

Why AI Content Detection Is Now Basic Reading Hygiene

So where does this leave you?

For anyone who publishes text, the question is straightforward: how do you show that your content was written by a human? For recruiters and editors, the question is different: how do you tell whether something was written by AI when AI content makes up half the stream?

An AI content detector addresses both questions, and the It's AI detector works in both directions. Authors can check their text before publishing to make sure it won't be mistakenly flagged as machine-written. Editors and recruiters can check incoming writing to see whether a human or a generator likely wrote it. AI content keeps growing, and bot traffic with it. If you publish or edit online, AI content detection isn't an optional feature anymore; it's a daily check.

FAQ — AI Content Has Crossed 50%

How does an AI content checker actually work?

Early checkers relied on statistical signals: perplexity (how predictable each word is given the ones before it) and burstiness (how much sentence length varies). AI-generated text tends to show low perplexity and a steady, uniform rhythm. Human writing is less predictable and more uneven.
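Here is a minimal sketch of both signals, under loud assumptions. Real detectors compute perplexity with a large language model; this toy version swaps in an add-one-smoothed bigram model built from a small reference text. Burstiness is taken here as the coefficient of variation of sentence length, which is one of several possible definitions. All function names are hypothetical.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def tokenize(text):
    """Lowercase word tokens; a deliberately crude tokenizer."""
    return re.findall(r"[a-z']+", text.lower())


def burstiness(text):
    """Coefficient of variation (std / mean) of sentence length in words."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def bigram_perplexity(text, reference):
    """Perplexity of `text` under a toy bigram model estimated from `reference`.

    Assumption: add-one smoothing, with one extra vocabulary slot for unseen words.
    """
    ref = tokenize(reference)
    unigrams = Counter(ref)
    bigrams = Counter(zip(ref, ref[1:]))
    vocab = len(unigrams) + 1

    tokens = tokenize(text)
    pairs = list(zip(tokens, tokens[1:]))
    if not pairs:
        return float("inf")

    # Each word is scored given the word before it.
    log_prob = sum(
        math.log((bigrams[(prev, word)] + 1) / (unigrams[prev] + vocab))
        for prev, word in pairs
    )
    return math.exp(-log_prob / len(pairs))
```

Lower `bigram_perplexity` means the text is more predictable relative to the reference, and a `burstiness` near zero means sentences are nearly uniform in length. Neither number is a verdict on its own, which is the limitation that pushed detectors beyond simple statistics.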

Modern detectors like It's AI use neural classifiers trained on stylometric features, model-specific fingerprints, and structural patterns that simple statistics can't catch. The output is usually a confidence score, not a yes/no verdict, because some signals overlap with formulaic human writing.

Is this written by AI? How to check without a detector

Watch for over-smooth transitions, generic examples, hedging phrases, and an inexplicable fondness for words like "delve," "comprehensive," and "landscape." That's the manual filter.

The method has real limits. AI models have gotten better at avoiding the obvious tells. And when a person edits an AI draft, the result looks almost the same as normal writing. Manual spotting works on rough first drafts from lazy users, not on polished text, which is what makes up most of the volume online.

Use the signs as a first-pass filter. For anything that matters, running the text through a dedicated tool is the more reliable move.

How do I check if text is AI-generated when someone sends it to me?

The fastest way to check if text is AI-generated is to paste it into a detector and read the paragraph-level output, not just the overall score. An overall 60% score on a 2,000-word piece doesn't tell you which parts were machine-written. The paragraph breakdown does.
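To illustrate why the paragraph breakdown carries more information than the overall score, here is a small sketch. It assumes a per-paragraph scoring function is available; `score_paragraph` is a hypothetical placeholder for whatever detector you use, not the API of It's AI or any other real tool, and the 0.5 threshold is arbitrary.

```python
def paragraph_report(text, score_paragraph, threshold=0.5):
    """Score each paragraph and return (overall, flagged_indexes, rows).

    `score_paragraph` is a caller-supplied function returning a value in 0..1.
    The overall score is the word-weighted average of paragraph scores.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    rows = [
        {"index": i, "words": len(p.split()), "score": score_paragraph(p)}
        for i, p in enumerate(paragraphs)
    ]
    total_words = sum(r["words"] for r in rows)
    if total_words == 0:
        return 0.0, [], rows
    overall = sum(r["score"] * r["words"] for r in rows) / total_words
    flagged = [r["index"] for r in rows if r["score"] >= threshold]
    return overall, flagged, rows


def fake_scorer(paragraph):
    """Stand-in scorer for demonstration only; not a real detection method."""
    return 0.9 if "delve" in paragraph.lower() else 0.1


sample = "I walked home late\n\nWe delve into landscapes\n\nRain fell all night"
overall, flagged, rows = paragraph_report(sample, fake_scorer)
```

In this toy run the overall score is moderate, while `flagged` points at the single paragraph responsible for it. That is the distinction between a document-level number and a breakdown you can act on.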

A few practical moves. Run the text through two different tools when stakes are high, especially academic or hiring contexts. Look at the confidence level, not a binary verdict. If you suspect hybrid content, ask the author for drafts or version history. A person who wrote something can usually show how it evolved. A tool can't.

How reliable are AI content detection tools, and can they be wrong?

AI content detection tools can be wrong, and knowing where they fail matters as much as the accuracy claims. Good tools achieve strong accuracy on purely AI-generated text. Accuracy drops on hybrid content, where a person edits an AI draft, because the detector sees mixed signals and hedges.

False positives happen. The usual victims are non-native English writers and anyone whose style is naturally formulaic, including a lot of technical writing. If you are wrongly flagged, the fix is process evidence such as drafts or edit history. For high-stakes decisions, don't rely on a single tool's verdict. Two tools agreeing carries more weight.
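One way to turn "two tools agreeing carries more weight" into a routine is a simple triage rule. This is a sketch under stated assumptions: the function name `triage`, the 0.8 and 0.2 cutoffs, and the wording of the outcomes are all hypothetical, and both scores are assumed to be confidence values between 0 and 1.

```python
def triage(score_a, score_b, high=0.8, low=0.2):
    """Combine two detector confidence scores (0..1) into a suggested next step."""
    for score in (score_a, score_b):
        if not 0.0 <= score <= 1.0:
            raise ValueError("scores must be between 0 and 1")
    if score_a >= high and score_b >= high:
        return "both high: ask for drafts or edit history"
    if score_a <= low and score_b <= low:
        return "both low: no follow-up needed"
    # Disagreement or middling confidence is not evidence either way.
    return "inconclusive: do not act on the scores alone"
```

Even the "both high" branch leads to a request for process evidence rather than a conclusion, since agreement between tools raises confidence without ruling out a false positive.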

Can I trust a checker for AI-generated content for high-stakes decisions like hiring?

A checker that flags AI-generated text gives you one signal, not a verdict. High-stakes decisions should never rest on a single signal. Use it the way an editor uses a spell-checker: as an input to judgment, not a substitute for it.

If a candidate's cover letter scores high on AI detection, that's information, not evidence of dishonesty. Follow up. Ask about the writing process, or request a short written task done in a controlled setting. The AI-generated content checker narrows what to look at; the final read happens with a human in the loop. Rejecting someone on a tool's score alone risks producing more false rejections than real catches.